Both versions offer similar functionality.įor more information, see CudaLaunch for iOS and Android. CudaLaunch for mobile is available for iOS and Android devices via the Apple App Store or Google Play Store. CudaLaunch for iOS and AndroidĬudaLaunch for mobile provides secure remote access to your organization's resources from mobile devices. CudaLaunch is available for Windows and macOS via the Microsoft 10 App Store and the macOS App Store.įor more information, see CudaLaunch for Windows and macOS. Remote users can access firewall services and features and, with administrative permissions, enable or disable dynamic firewall rules. CudaLaunch for Windows and macOSĬudaLaunch offers secure access to resources made available on the CloudGen Firewall. For a list of supported devices, see Supported Browsers, Devices and Operating Systems. The CudaLaunch portal's responsive interface is compatible for both desktop and mobile devices. CudaLaunch also enables administrators to manage dynamic firewall rules and integrates with the Barracuda VPN Client to connect via client-to-site VPN. In addition, CudaLaunch enables administrators to manage dynamic firewall rules and also integrates with the Barracuda VPN Client to connect via client-to-site VPN. Plus, the CUDA runtime version I’ve got on the TK1 is 6.5.CudaLaunch is a Windows, macOS, iOS, and Android application that provides secure access to your organization's applications and data from remote locations and a variety of devices. CudaLaunch is a Windows, macOS, iOS, and Android application that provides secure access to your organization's applications and data from remote locations and a variety of devices. The only similar topic on the forums I could find online was but I see the run-time spikes when not profiling too. However, I still don’t know what the problem is.

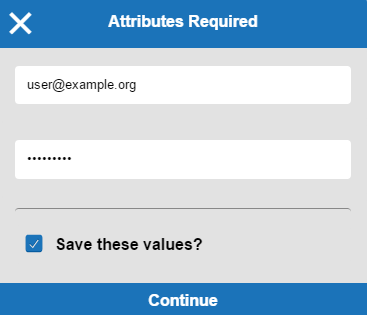

A favorite feature provides end users with quick access to frequently used apps and VPN client connections. I’ve now added calls to _threadfence_system() at the end of all my kernels and create the streams with cudaStreamDefault rather than with the cudaStreamNonBlocking flag. CudaLaunch was designed from the ground up to create an undeniably convenient tool that has an innovative swipe interface and rich, app-based end user experience. I found on rare occasions, cudaLaunch would stall for up to 11ms! Strangely, in this arrangement, the stall moved to cudaLaunch. CPU then waits for each of the three kernels with cudaStreamSynchronize and then proceeds to access the shared memory to which the three have written out to.three kernels get submitted each to its own stream, each with a cudaStreamWaitEvent dependency on stream 0 being done with the first kernel.one kernel does the first stage of processing in stream 0.They all need to wait for data output from another kernel first, though. Three of the kernels I need to run can be run concurrently. I then rearranged my processing to make use of streams.

The same would happen for me if I used cudaEventSynchronize. I found on rare occasions, cudaDeviceSynchronize would stall for up to 4ms randomly. Otherwise, I see incorrect contents when accessing the memory from the CPU after the GPU has written out to it.Īt first, I had a simple arrangement where all kernels where executed in the default stream, and just before the CPU was to access the shared memory with the results output from the GPU, I’d call cudaDeviceSynchronize. My observation is I need to call one of the CUDA API synchronisation functions so that the CPU / GPU shared memory gets synced properly. The GPU writes out its results to the shared memory, and then I access them from the CPU. I make use of pinned CPU / GPU shared memory when processing, but the majority of the load is on the GPU. I measure the per-frame run-time as below: CHECK_CUDA(cudaEventRecord(set_up.startEvent, 0)) ĬHECK_CUDA(cudaEventRecord(set_up.stopEvent, 0)) ĬHECK_CUDA(cudaEventSynchronize(set_up.stopEvent)) I’ve collected the data over sufficiently long sequences of frames. The data I’ve got suggests nothing wrong with the kernel execution times, they fluctuate by 0.1-0.2ms tops. I’ve profiled with NVVP, collecting both kernel execution times and CUDA API profiling information. The problem manifests itself as random spikes in run-time.

I’m seeing an obscure problem when running CUDA compute on the Jetson TK1 (GK20A).
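For readers trying to picture the stream arrangement from this post (one first-stage kernel in stream 0, three dependent kernels in their own streams, results read by the CPU from mapped pinned memory), here is a minimal sketch. It is not the poster's code: the kernels `firstStage` and `secondStage`, the buffer sizes, and this `CHECK_CUDA` definition are all hypothetical stand-ins, and it only illustrates the arrangement; it is not a fix for the stalls.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Hypothetical error-checking macro; the post's own CHECK_CUDA is not shown.
#define CHECK_CUDA(call)                                             \
    do {                                                             \
        cudaError_t err_ = (call);                                   \
        if (err_ != cudaSuccess) {                                   \
            std::fprintf(stderr, "%s:%d: %s\n", __FILE__, __LINE__,  \
                         cudaGetErrorString(err_));                  \
            std::exit(EXIT_FAILURE);                                 \
        }                                                            \
    } while (0)

// Placeholder kernels standing in for the real processing stages.
__global__ void firstStage(float *buf, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) buf[i] = static_cast<float>(i);
}

__global__ void secondStage(const float *in, float *out, int n, float scale) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = in[i] * scale;
}

int main() {
    const int n = 1 << 16;
    const int kBranches = 3;
    const int threads = 256;
    const int blocks = (n + threads - 1) / threads;

    // Allow mapped (zero-copy) pinned allocations; set before other CUDA work.
    CHECK_CUDA(cudaSetDeviceFlags(cudaDeviceMapHost));

    float *dIn = NULL;
    CHECK_CUDA(cudaMalloc((void **)&dIn, n * sizeof(float)));

    float *hOut[kBranches];  // CPU view of the shared result buffers
    float *dOut[kBranches];  // GPU view of the same buffers
    cudaStream_t streams[kBranches];
    for (int b = 0; b < kBranches; ++b) {
        CHECK_CUDA(cudaHostAlloc((void **)&hOut[b], n * sizeof(float),
                                 cudaHostAllocMapped));
        CHECK_CUDA(cudaHostGetDevicePointer((void **)&dOut[b], hOut[b], 0));
        // Non-blocking streams do not implicitly synchronise with stream 0,
        // so the explicit event dependency below is what orders the work.
        CHECK_CUDA(cudaStreamCreateWithFlags(&streams[b], cudaStreamNonBlocking));
    }

    cudaEvent_t firstDone;
    CHECK_CUDA(cudaEventCreateWithFlags(&firstDone, cudaEventDisableTiming));

    // Stage 1 runs in stream 0; the event marks its completion.
    firstStage<<<blocks, threads, 0, 0>>>(dIn, n);
    CHECK_CUDA(cudaGetLastError());
    CHECK_CUDA(cudaEventRecord(firstDone, 0));

    // Stage 2: three kernels, each in its own stream, each waiting on stage 1.
    for (int b = 0; b < kBranches; ++b) {
        CHECK_CUDA(cudaStreamWaitEvent(streams[b], firstDone, 0));
        secondStage<<<blocks, threads, 0, streams[b]>>>(dIn, dOut[b], n, b + 1.0f);
        CHECK_CUDA(cudaGetLastError());
    }

    // The CPU waits for each stream before touching the shared buffers.
    int mismatches = 0;
    for (int b = 0; b < kBranches; ++b) {
        CHECK_CUDA(cudaStreamSynchronize(streams[b]));
        for (int i = 0; i < n; ++i)
            if (hOut[b][i] != static_cast<float>(i) * (b + 1.0f)) ++mismatches;
    }
    std::printf("mismatches: %d\n", mismatches);

    for (int b = 0; b < kBranches; ++b) {
        CHECK_CUDA(cudaStreamDestroy(streams[b]));
        CHECK_CUDA(cudaFreeHost(hOut[b]));
    }
    CHECK_CUDA(cudaEventDestroy(firstDone));
    CHECK_CUDA(cudaFree(dIn));
    return mismatches == 0 ? EXIT_SUCCESS : EXIT_FAILURE;
}
```

Timing each API call on the host side (for example, around the kernel launches and each `cudaStreamSynchronize`) in a loop over many frames is one way to see which call absorbs a stall like the one described.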
