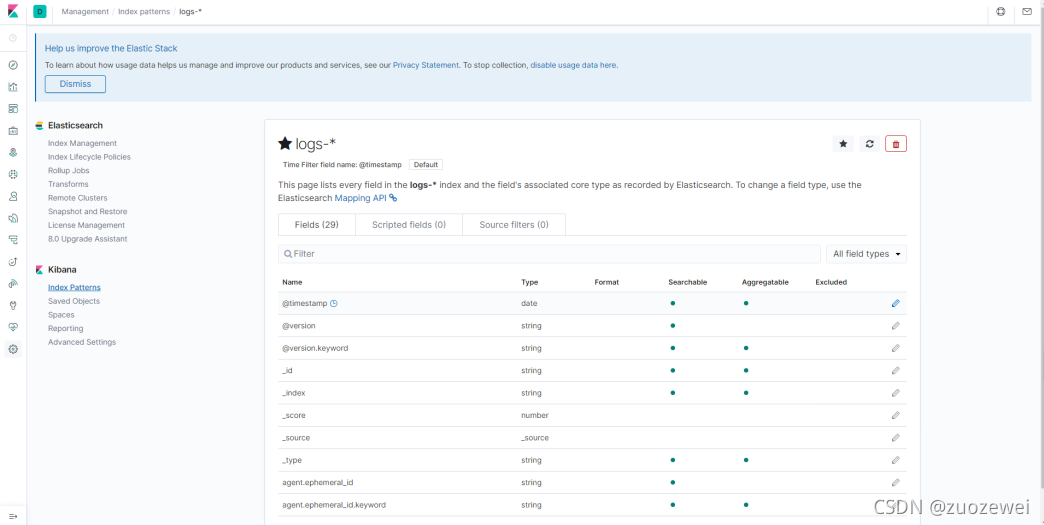

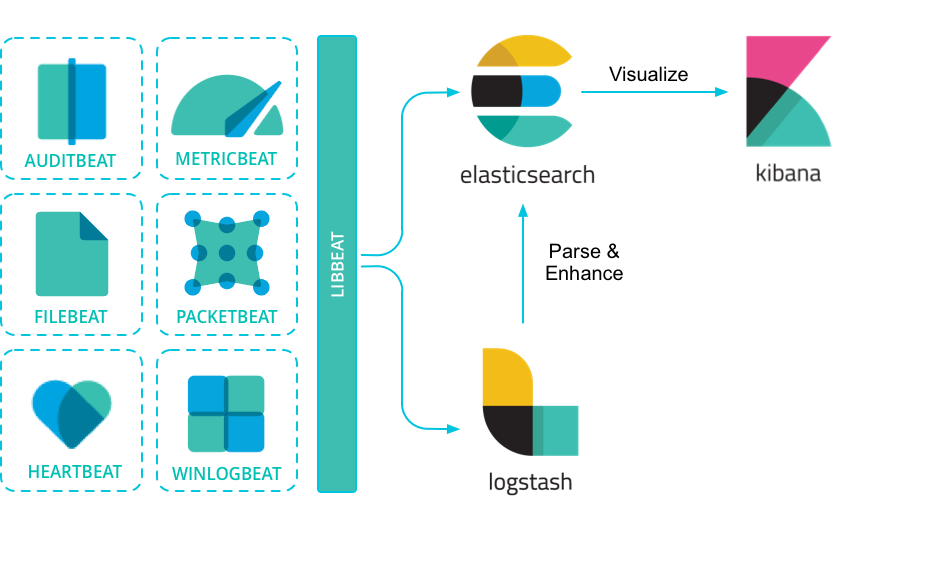

I'm trying to follow the "Parsing Logs with Logstash" tutorial, and I am having trouble when I try to connect my pipeline to Elasticsearch. I am using Ubuntu 20.04 and will install ELK on it. I will install Logstash, Elasticsearch and Kibana on the Linux server and install Filebeat on the Windows server that publishes a website.

Set up and configure Elasticsearch and Kibana on Ubuntu Linux. Step 1: Update and install dependencies.

Photo by Erlend Ekseth on Unsplash

Overview

Logstash was originally developed by Jordan Sissel to handle the streaming of large amounts of log data from multiple sources. After Sissel joined the Elastic team (then called Elasticsearch), Logstash evolved from a standalone tool into an integral part of the ELK Stack (Elasticsearch, Logstash, Kibana).

Airflow supports Elasticsearch as a remote logging destination, but this feature works slightly differently from its other remote logging options.

If the push from Filebeat to Logstash is successful, we can stop the command and run Filebeat as a service. Make sure it starts automatically after the machine is rebooted.

INFO - messages that carry important information, e.g. 'Payment transaction finished with status='

Data retention

Based on our use case, we should set the time period for which the logs are kept. Elastic comes with another tool for this, called Curator. Follow this tutorial to install it; for a newer version of Elasticsearch you need to install it via pip, otherwise Curator will not be compatible with Elasticsearch.

The action file is configured to delete all indices older than 45 days. It works right away: you only need to add the configuration file to `/home/user/.elasticsearch/` and change the `disable_action` flag to `False`. You can test your configuration with a dry run. To run Curator on a schedule, add a cron entry; the one used here runs as `user` in hour 1 of every day: `/usr/local/bin/curator /home/user/.curator/curator_action.yml > /var/log/curator.`
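To make the 45-day retention rule concrete, here is a minimal Python sketch of the selection logic only. It is not Curator's implementation and does not talk to Elasticsearch. The function name `indices_to_delete`, the `logstash-` prefix and the `%Y.%m.%d` date suffix are assumptions made for illustration; adjust them to however your indices are actually named.

```python
from datetime import date, datetime, timedelta

RETENTION_DAYS = 45  # retention period mentioned in the text


def indices_to_delete(index_names, today=None, prefix="logstash-", date_format="%Y.%m.%d"):
    """Return the index names whose date suffix is older than the retention period.

    The prefix and date format are hypothetical defaults, not values from the article.
    """
    today = today or date.today()
    cutoff = today - timedelta(days=RETENTION_DAYS)
    expired = []
    for name in index_names:
        if not name.startswith(prefix):
            continue  # skip indices that do not follow the assumed naming scheme
        try:
            index_day = datetime.strptime(name[len(prefix):], date_format).date()
        except ValueError:
            continue  # suffix is not a date, so leave the index alone
        if index_day < cutoff:
            expired.append(name)
    return expired
```

Calling the function with a list of index names and a fixed `today` returns only the names that fall before the cutoff, which is a convenient way to sanity-check a retention period before letting a real tool delete anything.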
jclose (Jason), March 17, 2016, 1:54pm: I have several different log files in a directory, and I want to forward them to Logstash for grokking and buffering, and then downstream to Elasticsearch. But that common practice seems redundant here.

Elasticsearch is a powerful open source search and analytics engine that makes data easy to explore.

Logstash's `pipeline.ordered` setting controls event ordering. Options are 'auto' (the default), 'true' or 'false'. 'auto' automatically enables ordering if the `pipeline.workers` setting is also set to 1, and disables it otherwise. 'true' enforces ordering on the pipeline and prevents Logstash from starting if there are multiple workers. 'false' disables any extra processing necessary for preserving ordering.

Figure 5: Parsed Access Log in Elasticsearch

Conclusion

We have seen how to use Filebeat to collect, parse and ship Tomcat logs directly to Elasticsearch. By using a combination of modules and tokenizers, you can eliminate the need for maintaining resource-intensive (and subsequently capital-intensive) processors such as Logstash.

It is also good practice to use logging messages in the local environment to speed up development, and to leave these messages in place for production use. To start logging, obtain a logger at the top of your file with `getLogger(__name__)`. The variable `__name__` will be translated into the name of the module, which will also appear in the final log messages.

Logging levels

Another part of the log structure is the log level. The log level helps us identify the severity of the message and makes it easier to navigate the log output. There are five levels that can be used for log messages. My usage of the logging levels is as follows:

DEBUG - messages that can help us track the flow of the algorithm, but are not important for anything other than troubleshooting. For example, 'Task has started', 'Task has ended in 5.4 seconds', 'Email for user id=55 was sent'.
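As a minimal sketch of the logger setup and levels described above, using only Python's standard `logging` module: the function `send_email` and its messages are hypothetical stand-ins for application code, and the `basicConfig` format string is just one reasonable choice, not something prescribed by the article.

```python
import logging

# __name__ resolves to the module name, which then appears in each log line.
logger = logging.getLogger(__name__)


def send_email(user_id):
    """Hypothetical task used only to show messages at different levels."""
    logger.debug("Task has started")  # flow tracing, useful for troubleshooting
    logger.info("Email for user id=%s was sent", user_id)  # important information
    logger.debug("Task has ended")
    return True


if __name__ == "__main__":
    # Show DEBUG and above on the console while developing locally.
    logging.basicConfig(
        level=logging.DEBUG,
        format="%(asctime)s %(name)s %(levelname)s %(message)s",
    )
    send_email(55)
```

The standard library defines five named levels (DEBUG, INFO, WARNING, ERROR, CRITICAL). Raising the configured level to INFO in production hides the DEBUG lines without touching the calls themselves.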
Note: you could also add Elasticsearch Logstash to this design, putting it in between Filebeat and Logstash.

In this tutorial, we are going to learn how to push application logs from our Django application to Elasticsearch storage and display them in a readable way in the Kibana web tool. The main aim of this article is to establish a connection between our Django server and the ELK stack (Elasticsearch, Logstash, Kibana) using another tool provided by Elastic: Filebeat. We will also briefly cover all preceding steps, such as the reasoning behind logging, configuring logging in Django and installing the ELK stack.

Having reasonable logging messages in production helped me discover several non-trivial bugs that would otherwise have gone unnoticed. Writing the logs as JSON is what makes them easier to query and analyze in Elasticsearch.
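One way to produce JSON log lines is a small custom formatter built on the standard `logging` and `json` modules. This is a sketch under assumptions, not the article's configuration: the names `JsonFormatter` and `build_json_logger` and the field names (`timestamp`, `level`, `logger`, `message`) are hypothetical choices, and a real Django project would normally wire the formatter in through its `LOGGING` setting instead.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        payload = {
            "timestamp": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Include the traceback text when an exception was logged.
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_json_logger(name, stream=None):
    """Return a logger that writes JSON lines to `stream` (stderr by default)."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    logger.propagate = False  # avoid duplicate output through the root logger
    return logger
```

Because every line is a self-contained JSON object, a shipper such as Filebeat can forward the fields as structured data instead of relying on pattern matching over free text.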
